Updated May 5, 2026

Benchmarking retrosynthetic predictions

Computational chemist: calibrate confidence-score reliability against known chemistry.

Benchmarking the model on chemistry you already know is the cleanest way to build intuition for what its confidence scores mean and where to trust them. This tutorial walks through running targets with known, published syntheses and reading the results critically.

Steps

- Pick a target where the published synthesis is well-documented: a textbook total synthesis, a JACS paper from the last decade, a process paper with a clean route. Have the published route in front of you.

- Submit the target through the retrosynthesis form. Use a route length close to the published step count for a fair comparison.

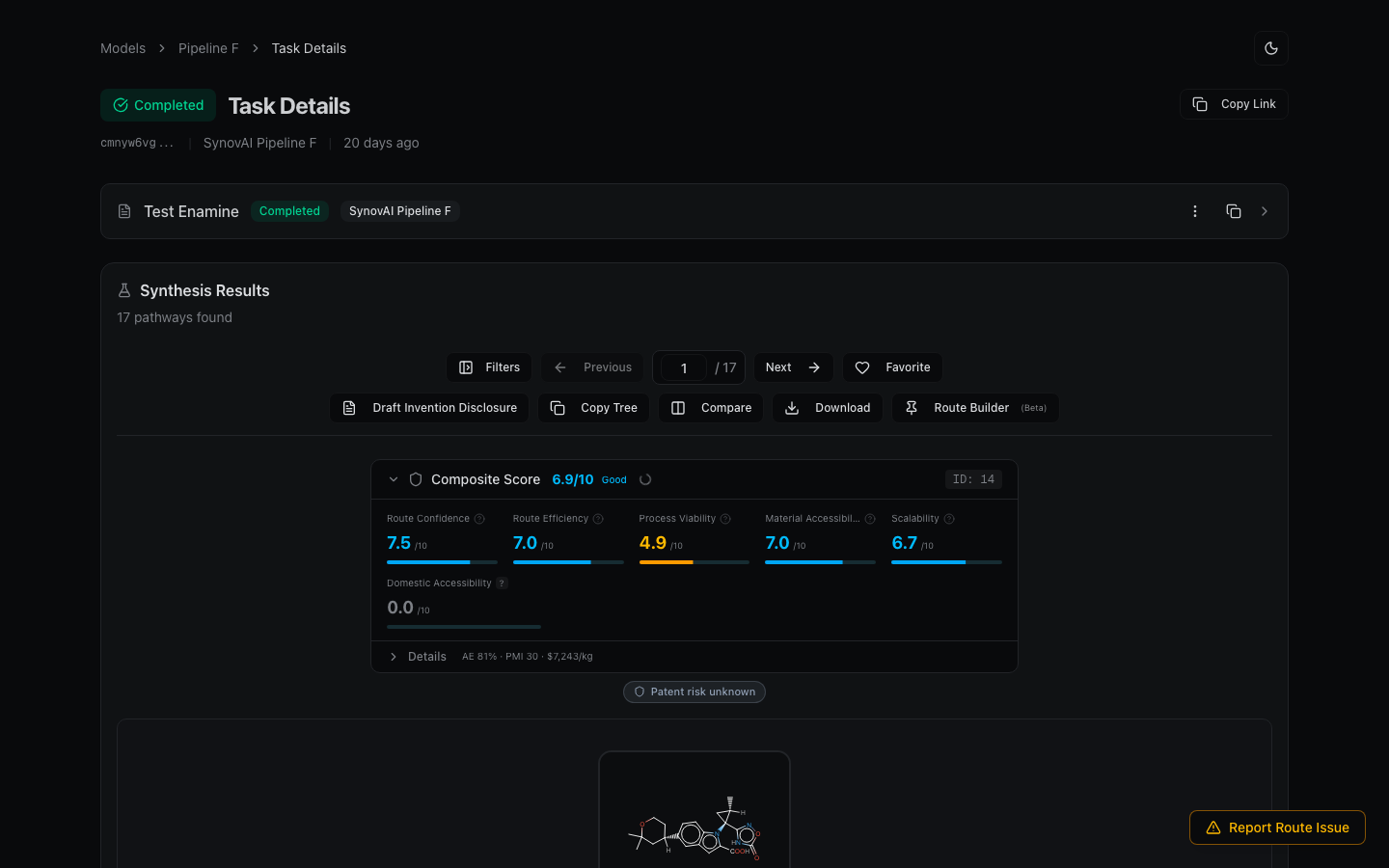

- When the job completes, scan the top-ranked routes for disconnections that match the published route. The confidence score reflects how well-precedented the model considers each step.

- For each step, click View Reference Entries to see what literature the model is drawing from. Match these against your published source.

- Note disagreements. The model may propose alternative disconnections that are equally valid (model creativity), or it may miss a key step from the published route (flag these for follow-up).

- Repeat with 5-10 known targets. You'll quickly develop a feel for when high confidence is genuinely reliable and when the model is overconfident on a familiar transformation type.
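If you want to put numbers on that feel, one option is to log each step you review and build a simple binned calibration table: group steps by confidence score and compare the average confidence in each bin with the fraction of steps you judged correct. The sketch below assumes confidence scores lie in [0, 1] and that you record, by hand, whether each step matches the published route or is an equally valid alternative. `StepRecord`, `calibration_table`, and `expected_calibration_error` are hypothetical helpers for your own notes, not part of the product.

```python
from dataclasses import dataclass


@dataclass
class StepRecord:
    """One reviewed retrosynthetic step (hypothetical record format)."""
    target: str           # name of the known target
    confidence: float     # model confidence score, ASSUMED to lie in [0, 1]
    judged_correct: bool  # matches the published route, or an equally valid alternative


def calibration_table(records, n_bins=5):
    """Bin steps by confidence (equal-width bins) and return one row per
    non-empty bin: (bin_low, bin_high, n_steps, mean_confidence, correct_rate)."""
    bins = [[] for _ in range(n_bins)]
    for r in records:
        if not 0.0 <= r.confidence <= 1.0:
            raise ValueError(f"confidence outside [0, 1]: {r.confidence}")
        # Clamp so a score of exactly 1.0 lands in the top bin.
        idx = min(int(r.confidence * n_bins), n_bins - 1)
        bins[idx].append(r)
    rows = []
    for i, members in enumerate(bins):
        if not members:
            continue
        mean_conf = sum(r.confidence for r in members) / len(members)
        correct_rate = sum(r.judged_correct for r in members) / len(members)
        rows.append((i / n_bins, (i + 1) / n_bins, len(members), mean_conf, correct_rate))
    return rows


def expected_calibration_error(records, n_bins=5):
    """Weighted average gap between mean confidence and correct rate across bins.
    0.0 means confidence tracked your judgments perfectly in every bin."""
    total = len(records)
    return sum(
        n / total * abs(mean_conf - rate)
        for _, _, n, mean_conf, rate in calibration_table(records, n_bins)
    )
```

A bin whose mean confidence sits well above its correct rate is where the model is overconfident. With only 5-10 targets, some bins will hold very few steps, so treat the table as a rough guide rather than a statistic.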

What you walk away with

A calibrated sense of how reliable the confidence scores are for the chemistry classes you care about, which is worth having before you trust model predictions on truly novel targets.
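Because reliability can differ by transformation type, it may help to break your notes down by reaction class. The sketch below assumes you tag each reviewed step yourself with a class label such as "amide coupling". It then reports mean confidence, the fraction of steps judged correct, and the gap between the two for each class. The function name and the `threshold` and `min_steps` defaults are illustrative choices, not product settings.

```python
from collections import defaultdict


def overconfidence_by_class(steps, threshold=0.15, min_steps=5):
    """steps: iterable of (reaction_class, confidence, judged_correct) tuples,
    with confidence ASSUMED to be on a [0, 1] scale.

    Returns (summary, flagged):
      summary: {class: (n_steps, mean_confidence, correct_rate, gap)}
      flagged: classes with at least `min_steps` steps whose mean confidence
               exceeds their correct rate by more than `threshold`.
    """
    grouped = defaultdict(list)
    for reaction_class, confidence, judged_correct in steps:
        grouped[reaction_class].append((confidence, judged_correct))

    summary, flagged = {}, []
    for reaction_class, items in sorted(grouped.items()):
        n = len(items)
        mean_conf = sum(c for c, _ in items) / n
        correct_rate = sum(ok for _, ok in items) / n
        gap = mean_conf - correct_rate  # positive gap = overconfident
        summary[reaction_class] = (n, mean_conf, correct_rate, gap)
        if n >= min_steps and gap > threshold:
            flagged.append(reaction_class)
    return summary, flagged
```

The `min_steps` guard keeps a single unlucky step from flagging an entire class. Raise it as your benchmark set grows.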